This episode was designed by real people and narrated by AI.

You've probably noticed terms like XR, MR, VR, AR, and Hologram appearing more frequently in educational technology discussions. What does this all mean for your classroom? Is it really important to understand? Popularized by films like Minority Report and made more accessible by recent Apple releases, these technologies fall under the paradigm of spatial computing.

We'll start with a clear definition of spatial computing, explore its core components, then explain why it matters for your students' learning.

Spatial computing is an educational approach that integrates immersive technologies to create seamless connections between digital and physical learning environments. It enhances student experiences in STEAM/STEM education, collaborative projects, and hands-on problem-solving.

But spatial computing isn't just the latest tech trend. It's grounded in serious research. In 2003, MIT Media Lab researcher Simon Greenwold defined it as "human interaction with a machine in which the machine retains and manipulates referents to real objects and spaces." [ 1 ] Simply put: it's about technology understanding the physical space where your students learn.

Unlike what you might see in tech advertisements, spatial computing doesn't require expensive headsets. Instead, it relies on four core components, each with various technological implementations:

Depending on your teaching context and learning objectives, spatial computing can take different forms.

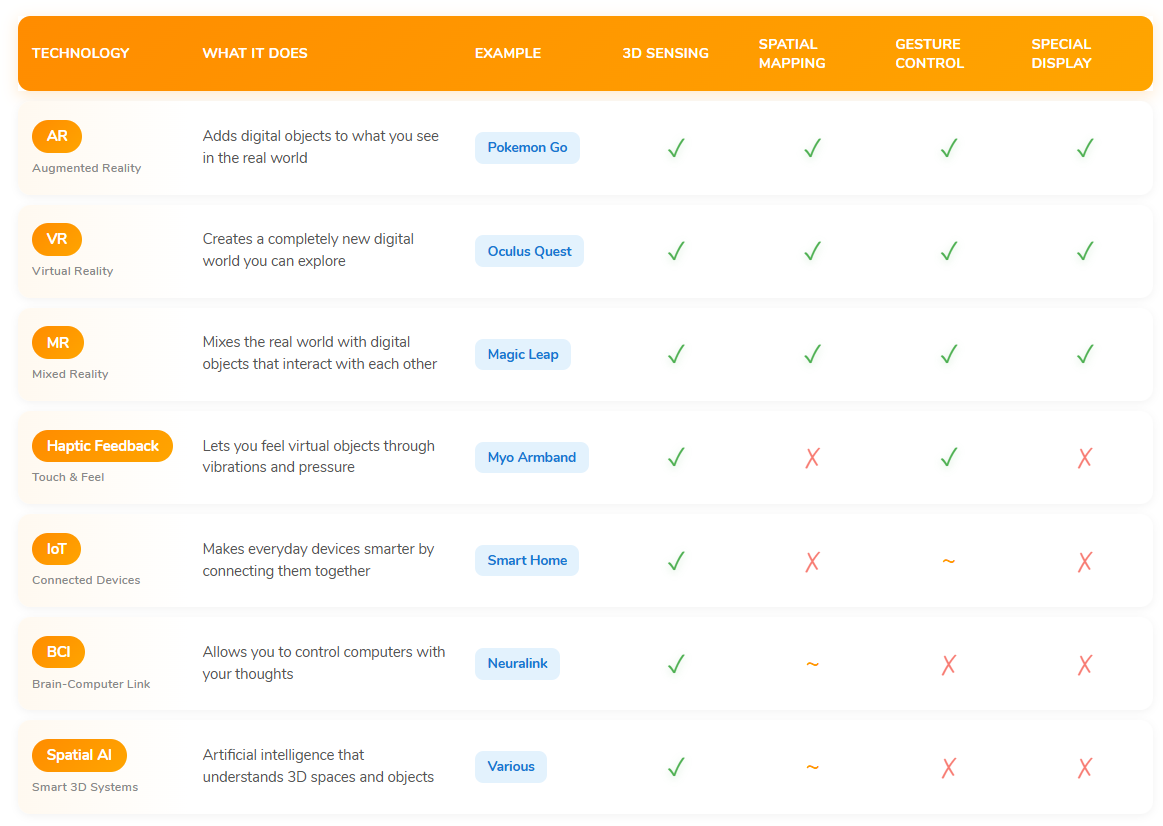

Various immersive technologies fall under Spatial Computing: Augmented Reality (AR), Virtual Reality (VR), Mixed Reality (MR), Projection Mapping, Holograms, and more. The field advances rapidly. Here's a quick overview to help distinguish them:

Note: Examples listed represent well-known applications, not necessarily the best classroom use cases. These technologies often overlap and can be combined for different learning objectives.

Each of these tools comes with important ethical considerations for student safety and wellbeing.

Minority Report came out over 20 years ago, and thankfully, we've moved beyond that vision in terms of what is pedagogically possible. With the rise of XR (AR + VR + MR) interactions, designing learning experiences that go beyond "tap, scroll, and pinch" becomes essential for engaging students' full cognitive potential, in both mind and body.

As data engineer Wojciech Marusarz observes:

"A majority of our interaction with computers involves fingers and eyes, but some of the parts of our body like legs, arms and mouth are criminally underused—or not used for interaction at all. This is debilitating—pretty much like typing emails with just one finger."

Research supports this observation. Studies comparing passive interactions (clicking, swiping) with full-body engagement show that students using whole-body movements achieved significantly higher learning gains and better retention [ 2 ]. When students move their entire arm to manipulate a concept, rather than just tapping a screen, their brains process the learning more deeply.

While we're excited about technological progress, we're committed to introducing tools only when there's solid evidence of their educational viability and safety. Regular screens have already shown us how little we know about long-term impacts. We won't rush into cutting-edge solutions without proper evaluation.

Our approach prioritizes:

Our methods evolve as classroom needs emerge, always prioritizing ethical implementation and student wellbeing.

[ 1 ] Greenwold, S. (2003). Spatial Computing (MIT Media Lab Thesis). Massachusetts Institute of Technology.

[ 2 ] Johnson-Glenberg, M. C., Birchfield, D. A., Tolentino, L., & Koziupa, T. (2014). Collaborative embodied learning in mixed reality motion-capture environments: Two science studies. Journal of Educational Psychology, 106(1), 86-104.

[ 3 ] Billinghurst, M., & Kato, H. (2002). Collaborative augmented reality. Communications of the ACM, 45(7), 64-70.

[ 4 ] Goldin-Meadow, S. (2014). Gesture's role in speaking, learning, and creating language. Annual Review of Psychology, 65, 169-195.